AI-Powered Shadowing: How the Classic Polyglot Technique Got a 2026 AI Upgrade That Makes Fluency Feel Automatic

I have a confession: I spent three years studying French before I could hold a real conversation. Three years of flashcards, grammar drills, verb conjugation tables taped above my desk — and then a waiter in Lyon spoke to me at normal speed and I froze like I had never heard the language before. My ears knew the words individually, but they arrived in a stream so fast, so melodic, so unlike anything in my textbook audio, that my brain simply refused to keep up.

That moment cracked something open. I started searching for what polyglots — the people who actually speak four, six, ten languages — do differently from the rest of us, and the same technique kept surfacing in every interview, every forum post, every dog-eared language-learning memoir I could find.

Shadowing.

What the Shadowing Technique Actually Is (and Why Polyglots Swear By It)

Shadowing is disarmingly simple in concept: you listen to a native speaker and repeat what they say in real time, trailing just a half-second behind, mimicking not only the words but the pitch, the rhythm, the pace, the breath patterns — everything that makes a language sound like that language rather than a translated version of your own. Alexander Arguelles, the legendary hyperpolyglot who popularized the method in the early 2000s, used to walk briskly through parks while shadowing audio tracks because the physical movement kept his brain engaged and his self-consciousness low, which tells you something about how different this feels from sitting at a desk with a grammar book.

The reason the shadowing technique for language learning works so well is that it trains the exact skill most methods ignore: production at native speed. When you shadow, your mouth learns the physical choreography of the language — where the tongue sits for a French "r," how Korean syllables connect without the pauses English speakers instinctively insert, the way Spanish rhythm rises and falls in patterns that no textbook rule can fully capture. Your ears learn to parse fast speech because you are forced to process it instantly. Your brain builds the neural pathways between hearing a sound and producing it, which is the literal foundation of fluency.

But there was always one brutal problem.

The Feedback Gap That Kept Shadowing Out of Reach

When you shadow alone, with headphones on, audio playing, and your voice echoing into an empty room, you have no idea whether you are getting it right. You might repeat every word on time but flatten the intonation into something no native speaker would recognize. You might swallow consonants that matter. Your vowels might drift toward your mother tongue without you noticing, because your own untrained ear hears what it expects to hear rather than what you actually produced.

Polyglots solved this by recording themselves, listening back obsessively, comparing waveforms manually, or working with tutors who could catch their errors — all of which required either significant technical patience or money or both. For the average learner trying to shadow Korean dramas or French podcasts or Spanish radio, the feedback loop was essentially broken. You were practicing in the dark, hoping repetition alone would fix what only awareness could address.

This is exactly the gap that AI closed in 2026. And it changed everything.

How AI Shadowing Apps Work: Real-Time Feedback That Actually Matters

The new generation of AI pronunciation practice tools — the ones that have made shadowing feel like a completely different exercise — work by analyzing your speech on three levels simultaneously, which is something no human tutor could do with the same precision and speed.

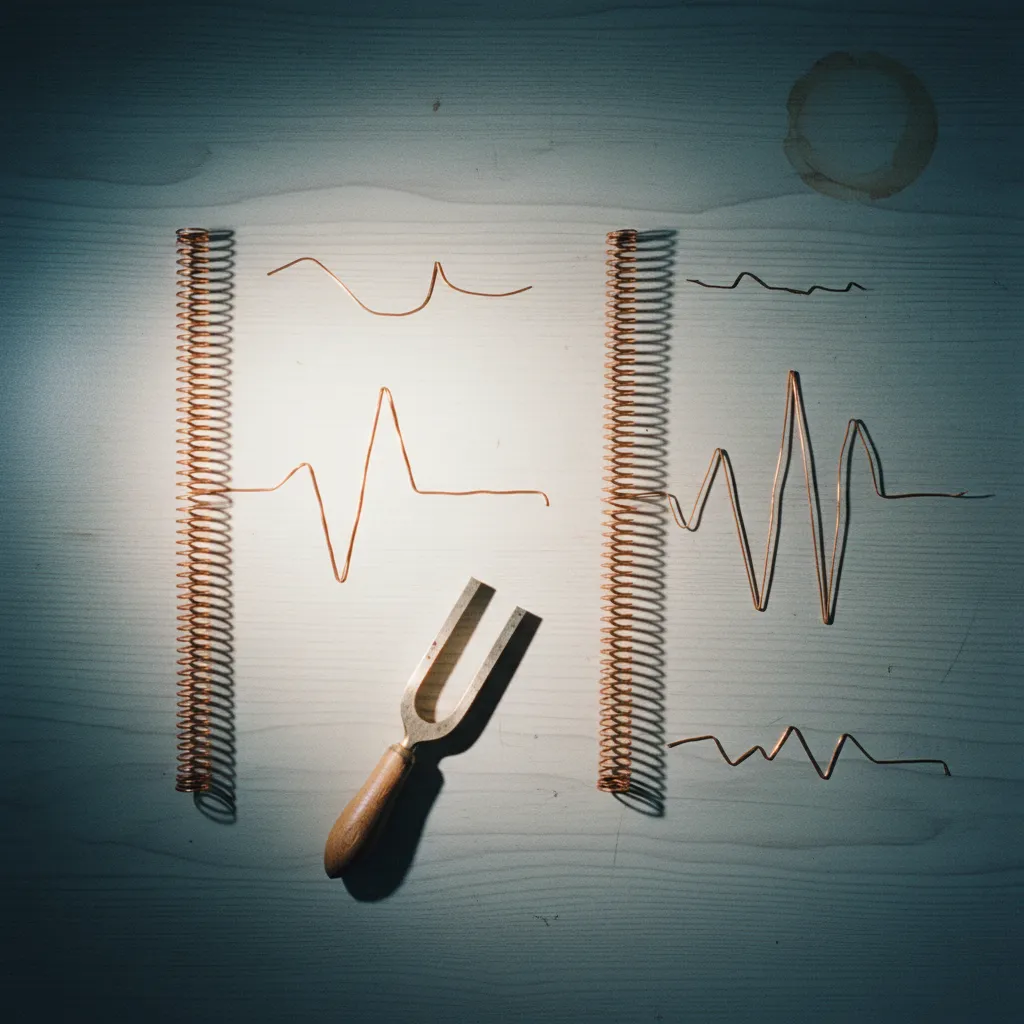

Pitch Contour Analysis

The AI maps the melodic shape of your sentence against the native speaker's, showing you in real time where your voice rises when it should fall, where you flatten a tonal distinction that carries meaning (critical in Mandarin, Vietnamese, Thai, and other tonal languages), and where your overall melody diverges from the natural pattern. This is not the simple "correct or incorrect" binary of older speech-recognition tools. It is a continuous, visual representation of how your music compares to theirs.
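
To make the idea concrete, here is a minimal sketch in Python of one way two pitch contours could be compared. It is my own illustration, not how any particular app works. It assumes you already have voiced pitch values in Hz for both recordings (extracting pitch from audio is out of scope here), and the function names `to_semitones`, `resample`, and `contour_distance` are made up for this example. Each contour is converted to semitones relative to its speaker's own median, so a deep voice and a high voice can be compared on shape alone.

```python
import math
from statistics import median


def to_semitones(f0_hz):
    """Convert voiced pitch values (Hz) to semitones relative to the speaker's own median."""
    ref = median(f0_hz)
    return [12.0 * math.log2(f / ref) for f in f0_hz]


def resample(values, n):
    """Linearly interpolate a contour to n evenly spaced points (n >= 2)."""
    if len(values) == 1:
        return [values[0]] * n
    out = []
    for i in range(n):
        pos = i * (len(values) - 1) / (n - 1)
        lo = int(pos)
        hi = min(lo + 1, len(values) - 1)
        frac = pos - lo
        out.append(values[lo] * (1 - frac) + values[hi] * frac)
    return out


def contour_distance(native_hz, learner_hz, n=50):
    """RMS difference, in semitones, between two speaker-normalized contours."""
    a = resample(to_semitones(native_hz), n)
    b = resample(to_semitones(learner_hz), n)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / n)
```

With this normalization, a learner who traces the native melody one octave lower gets a distance of essentially zero, while a learner who speaks in a monotone gets a clearly larger one. A real tool would also need to align the two recordings in time, which this sketch skips by simply stretching both to the same number of points.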

Rhythm and Timing Mapping

Languages have different rhythmic DNA — Japanese is mora-timed, English is stress-timed, French is syllable-timed — and the AI tracks whether you are matching the temporal structure of the language or unconsciously imposing your native rhythm onto foreign words. It notices when you linger too long on unstressed syllables in Portuguese, when you rush through the doubled consonants in Italian that native speakers hold for a fraction of a second longer, when your pauses fall in the wrong places in a German compound sentence.
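
One measure phoneticians use to put a number on this kind of rhythm is the normalized Pairwise Variability Index (nPVI), which captures how much each interval differs in length from the next. The sketch below is only an illustration of that formula in Python. Whether any given app uses nPVI is an assumption on my part, and feeding it syllable durations (rather than the vowel intervals it is usually computed on) is a simplification.

```python
def npvi(durations):
    """Normalized Pairwise Variability Index over successive interval durations.

    Values near 0 mean very even timing. Higher values mean a strong
    alternation between long and short intervals.
    """
    if len(durations) < 2:
        raise ValueError("need at least two durations")
    # For each neighboring pair: absolute difference divided by the pair's mean.
    terms = [abs(a - b) / ((a + b) / 2) for a, b in zip(durations, durations[1:])]
    return 100 * sum(terms) / len(terms)


def rhythm_gap(native_durations, learner_durations):
    """Difference between learner and native nPVI (positive = learner is less even)."""
    return npvi(learner_durations) - npvi(native_durations)
```

If the native speaker's syllables are all the same length and yours alternate between short and long, `rhythm_gap` comes out positive, a rough sign that you are carrying a stress-timed habit into a more evenly timed language.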

Phoneme-Level Pronunciation Scoring

At the granular level, the AI isolates individual sounds and flags the ones that fall outside the acceptable range for native speech, distinguishing between errors that would cause misunderstanding and variations that are within natural tolerance — a nuance older tools completely missed, which led to the frustrating experience of being marked "wrong" for a perfectly comprehensible pronunciation.
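
The distinction between "wrong" and "different but fine" can be sketched as a simple tolerance band. Everything in this Python example is hypothetical: the 0-to-1 score, the `contrastive` flag, and the two thresholds are placeholders I chose for illustration, not values taken from any real scoring model.

```python
from dataclasses import dataclass


@dataclass
class PhonemeScore:
    phoneme: str
    score: float       # hypothetical closeness to the native target, 0.0 to 1.0
    contrastive: bool  # True if confusing this sound can change a word's meaning


def classify(p, accept=0.8, reject=0.5):
    """Sort a scored phoneme into one of three feedback buckets."""
    if p.score >= accept:
        return "ok"
    # Only flag a hard error when the sound is both far off and meaning-bearing.
    if p.contrastive and p.score < reject:
        return "may cause misunderstanding"
    return "accent variation"
```

The design choice worth noticing is the middle bucket: a sound that is off target but unlikely to confuse a listener gets labeled as accent variation instead of being marked wrong.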

Put these three layers together, and you get something that did not exist before: a shadowing partner that listens as carefully as the best language coach in the world but is available at 2 AM, never gets tired, never judges, and costs a fraction of private tutoring.

At LingoTalk, we have been watching this space closely because it aligns with something we deeply believe — that the best language learning happens when technology removes barriers to practice rather than replacing the human effort that makes practice meaningful.

A Framework for AI-Powered Shadowing (for Any Language)

Here is how I rebuilt my own shadowing practice once these AI tools became available. Every learner's path looks different, so treat this less as a step-by-step guide and more as a progression whose logic you can adapt.

Phase One: Listen First, Shadow Second

Before shadowing a passage, I listen to it three times without speaking — once for the overall meaning, once to notice the rhythm, and once to catch the sounds that feel unfamiliar or tricky in my mouth. This priming step prevents the most common shadowing mistake, which is diving into repetition before your ear has a map of what it is trying to reproduce. The AI feedback is far more useful when you already have an internal model to compare it against.

Phase Two: Shadow at 70% Speed

Most AI shadowing apps now allow speed adjustment, and starting at roughly 70% of native speed gives your mouth time to find the right positions without the panic of real-time pace. I shadow the passage five to seven times at this speed, paying attention to the AI's pitch and rhythm feedback more than the phoneme scores, because melody and timing are the architecture that everything else sits on.
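
The arithmetic behind a slowed-down session is simple but worth seeing once, because it tells you how long a practice block will really take. This is a small Python sketch with names I made up (`slowed_duration`, `practice_time`). It assumes that playing at 70% speed stretches a clip to its original length divided by 0.7.

```python
def slowed_duration(clip_seconds, speed=0.7):
    """Length of a clip when played back at the given fraction of native speed."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    return clip_seconds / speed


def practice_time(clip_seconds, repetitions, speed=0.7):
    """Total time spent shadowing a clip a given number of times at that speed."""
    return repetitions * slowed_duration(clip_seconds, speed)
```

A 14-second passage stretches to about 20 seconds at 70% speed, so five to seven passes take roughly 100 to 140 seconds, which fits comfortably inside a five-minute session.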

Phase Three: Close the Gaps at Full Speed

Once the slowed-down version feels comfortable — and "comfortable" here means the AI's pitch contour for your voice roughly mirrors the native one, not that it is perfect — you move to full speed. This is where the real shadowing magic happens, because at native pace your conscious mind cannot micromanage every sound, and your mouth starts relying on the muscle memory you built in phase two. The AI now becomes most valuable for catching the errors that persist under pressure: the vowels that revert, the rhythm that slips, the consonant clusters that blur.

Phase Four: Free Shadow Without Text

The final phase is shadowing without subtitles, transcription, or any visual crutch — just sound in, sound out, as fast as the speaker delivers it. This is where fluency lives. The AI feedback at this stage is less about individual corrections and more about overall prosody — does your speech have the same shape, the same energy, the same natural flow as the original? When the answer starts to be yes, something has shifted in your brain that vocabulary lists and grammar exercises could never produce.

Why This Is the Most Underrated Language Learning Method of 2026

The AI language learning conversation in 2026 has been dominated by chatbots, real-time translation, and AI-generated lesson plans — all valuable, all worthy of attention. But shadowing with AI feedback is the method that directly trains the physical and neurological machinery of fluent speech in a way that conversation practice alone cannot, because conversation lets you fall back on the words and structures you already know while shadowing forces you into the patterns of native speakers whether your comfort zone approves or not.

Polyglot techniques in 2026 are not fundamentally different from what Arguelles practiced while pacing through parks — the core insight that your body must learn the language, not just your mind, has not changed. What has changed is that AI removes the loneliness and uncertainty from the process, turning shadowing from a leap of faith into a measurable, adjustable, deeply personal practice.

The research points the same way. Studies on shadowing have reported improvements in listening comprehension, speaking speed, and accent reduction, along with learner confidence, which may matter most: when you can hear yourself sounding closer to a native speaker, the psychological barrier to actually using the language in real life begins to dissolve.

What to Look for in an AI Shadowing App

Not all tools are equal, and a few features separate genuinely useful AI shadowing apps from gimmicky ones: real-time visual feedback (not just a score after you finish), adjustable playback speed, support for the specific language you are learning with phoneme models trained on that language rather than adapted from English, the ability to import your own audio so you can shadow content you actually care about, and — this matters more than it sounds — a clean, uncluttered interface that does not distract you during the intense focus that shadowing demands.

At LingoTalk, we are always exploring how tools like these integrate into a holistic learning journey, because shadowing is most powerful not as an isolated drill but as one part of a practice ecosystem that includes comprehension, conversation, cultural context, and the kind of patient, stacked learning that turns effort into real ability.

The Takeaway

Shadowing was already the best-kept secret in language learning. AI made it accessible to everyone — not just disciplined polyglots with the patience to self-diagnose their errors, but anyone willing to put on headphones, open an app, and speak.

The technique is simple. The AI handles the feedback. Your only job is to show up and echo.

Start with five minutes a day. Pick a speaker whose voice you enjoy. Shadow them until their rhythm starts to feel like yours. That is not a metaphor — it is the actual neurological process that produces fluency, and for the first time in the history of language learning, you can watch it happen in real time on your screen.

Your mouth already knows how to make these sounds. It just needs someone — or something — to show it the way.
