Your AI Language Tutor Can Now Tell When You're About to Quit: How Emotion-Aware AI Is Detecting Burnout and Saving Language Learners in 2026

"I don't think I'm bad at Spanish. I think I just... stopped caring."

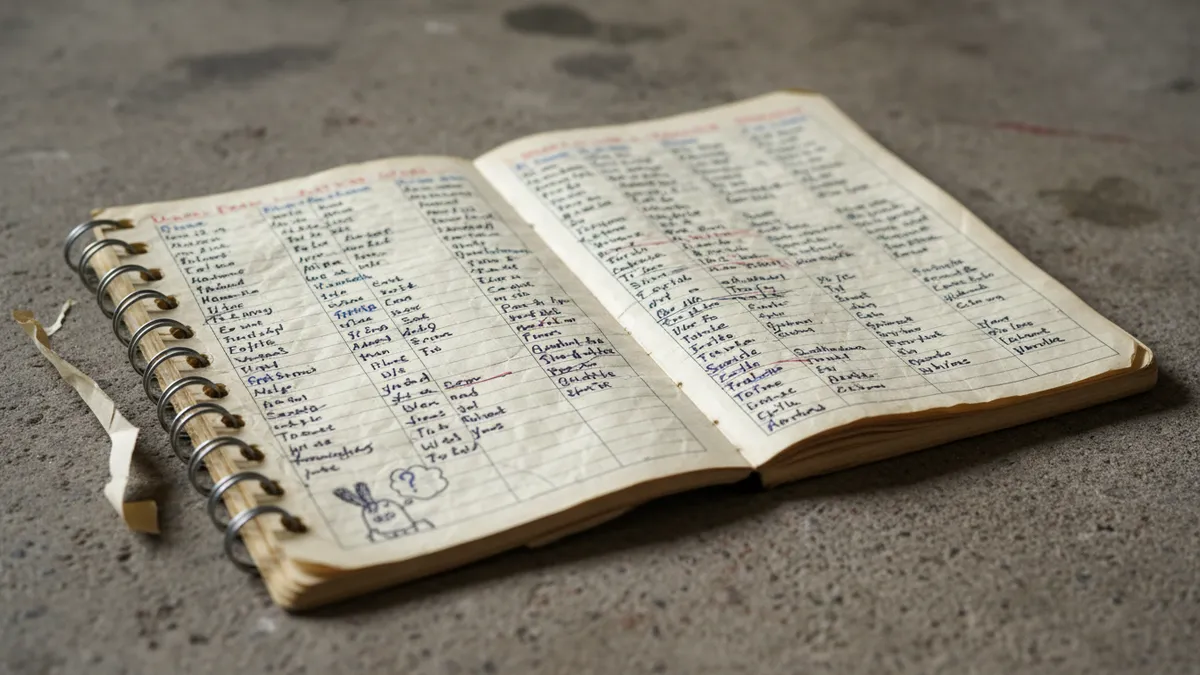

A friend said that to me over coffee last month. She'd been at it for two years. Duolingo streak, private tutor, notebook full of conjugation tables. Then one Tuesday, she didn't open the app. Wednesday either. By the following Monday, she'd deleted it.

She wasn't struggling with grammar. She was burned out. And nobody — not the app, not the tutor, not even she herself — saw it coming.

That conversation keeps replaying in my head, because in 2026, we finally have technology that could have seen it. Emotion-aware AI tutors are now analyzing the way we speak, the way we hesitate before typing, and the rhythm of our study sessions to detect language learning burnout before we consciously register it. Then they intervene. Quietly. Automatically. Before we reach for that delete button.

The Burnout Epidemic Nobody Measured Until Now

We all know the feeling. The flashcards blur together. The podcast we used to love becomes background noise. We reschedule the conversation exchange for the third time this week.

Language learning burnout isn't new. But 2026 is the year we started treating it as measurable, predictable, and — critically — preventable.

Lindsay Does Languages ran her viral "Language Reset" event earlier this year, drawing tens of thousands of learners who'd quietly plateaued or walked away entirely. The response was staggering. It proved something researchers had been whispering about for years: the dropout problem in language learning isn't primarily about method or motivation. It's about emotional exhaustion.

A 2025 meta-analysis published in Scientific Reports found that 68% of self-directed language learners experience measurable emotional fatigue within the first eighteen months. Not frustration with content. Not lack of time. Emotional fatigue — the slow, creeping erosion of the feeling that any of this matters.

We've been solving the wrong problem. We've been building better curricula when we should have been building better listeners.

What Emotion-Aware AI Actually Detects

So what does an AI tutor with emotional intelligence actually look for? Not tears. Not dramatic proclamations. The signs are quieter than that.

Much quieter.

Vocal Tone and Prosody Shifts

When we practice speaking with an AI tutor, our voice carries data we don't intend to share. A 2026 study in Nature Machine Intelligence demonstrated that transformer-based models can detect affective state shifts — frustration, disengagement, fatigue — from vocal prosody with over 89% accuracy.

What does that look like in practice? Our pitch flattens. Our response latency increases by fractions of a second. We start trailing off at the ends of sentences instead of finishing them with intention. These are patterns invisible to us in the moment but unmistakable to a system trained on millions of learner interactions.
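To make "pitch flattens" and "latency increases" a little more concrete, here is a minimal sketch of how those two signals might be quantified. It is not the method from the study above and not any product's implementation. It assumes pitch samples (in Hz, with 0 meaning an unvoiced frame) and response latencies (in seconds) have already been extracted by some other tool, and the function names `pitch_variability` and `prosody_shift` are hypothetical.

```python
import math
from statistics import mean, pstdev


def pitch_variability(f0_hz: list[float]) -> float:
    """Spread of voiced pitch samples in semitones (0.0 means perfectly flat)."""
    voiced = [f for f in f0_hz if f > 0]  # assumption: 0 marks an unvoiced frame
    if len(voiced) < 2:
        return 0.0
    # Convert to semitones relative to the first voiced sample so the
    # measure does not depend on how high or low the speaker's voice is.
    semitones = [12 * math.log2(f / voiced[0]) for f in voiced]
    return pstdev(semitones)


def prosody_shift(
    baseline_f0: list[float],
    recent_f0: list[float],
    baseline_latency_s: list[float],
    recent_latency_s: list[float],
) -> dict[str, float]:
    """Compare recent speech with the learner's own baseline.

    Assumes both latency lists are non-empty.
    """
    base_var = pitch_variability(baseline_f0)
    recent_var = pitch_variability(recent_f0)
    return {
        # Below 1.0 means the recent pitch contour is flatter than usual.
        "pitch_variability_ratio": recent_var / base_var if base_var else 1.0,
        # Positive means the learner is taking longer to start answering.
        "latency_increase_s": mean(recent_latency_s) - mean(baseline_latency_s),
    }
```

The comparison is against the learner's own earlier sessions rather than a global norm, which is one simple way to avoid treating a naturally flat or naturally slow speaker as disengaged.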

Typing Hesitation and Micro-Pauses

For those of us who learn through text-based exercises, the keyboard tells its own story. Researchers at ETH Zurich published findings this year showing that keystroke dynamics — the tiny pauses between characters, the increase in backspacing, the longer hover time before submitting an answer — correlate strongly with emotional disengagement.

We're not talking about the learner who pauses to think about grammar. We're talking about the learner who pauses because they're staring past the screen. The AI learns to tell the difference. It takes time. It takes pattern recognition across dozens of sessions. But it learns.
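As a rough illustration of the raw material involved, the sketch below summarizes a single answer attempt into the three keystroke signals mentioned above: pauses between characters, backspacing, and hover time before submitting. It is a simplified assumption of ours, not the researchers' pipeline. The `KeyEvent` type and `keystroke_features` function are hypothetical, and telling a thinking pause from a disengaged one would still require comparing these numbers across many sessions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class KeyEvent:
    t: float   # seconds since the exercise prompt appeared
    key: str   # e.g. "a", "Backspace", "Enter"


def keystroke_features(events: list[KeyEvent]) -> dict[str, float]:
    """Summarize one answer attempt. Expects events sorted by time."""
    typing = [e for e in events if e.key != "Enter"]
    if not typing:
        return {"mean_gap_s": 0.0, "backspace_ratio": 0.0, "submit_hover_s": 0.0}

    # Pauses between consecutive keystrokes while composing the answer.
    gaps = [b.t - a.t for a, b in zip(typing, typing[1:])]
    backspaces = sum(1 for e in typing if e.key == "Backspace")

    # Hover time: delay between the last typed key and pressing Enter.
    submit_hover = 0.0
    if events[-1].key == "Enter":
        submit_hover = events[-1].t - typing[-1].t

    return {
        "mean_gap_s": mean(gaps) if gaps else 0.0,
        "backspace_ratio": backspaces / len(typing),
        "submit_hover_s": submit_hover,
    }
```

A single attempt with a long hover means very little. The features only become informative once they are tracked against the same learner's history.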

Session Behavior and Engagement Decay

This is the macro view. An adaptive AI language app doesn't just look at what's happening inside a session — it tracks the shape of our engagement over weeks.

Are sessions getting shorter? Are we skipping the speaking exercises we used to choose first? Are we completing lessons but scoring lower on material we've previously mastered? Have we stopped exploring new content entirely, mechanically reviewing the same safe deck of vocabulary?

Alone, each signal is noise. Together, they form a portrait of someone drifting toward the exit.
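One simple way to express "alone it's noise, together it's a portrait" is to flag a learner only when several engagement metrics fall at once. The sketch below is illustrative only: the `WeekSummary` fields, the 25% drop threshold, and the three-signal minimum are assumptions we chose for the example, not values from any study or product.

```python
from dataclasses import dataclass


@dataclass
class WeekSummary:
    avg_session_minutes: float
    speaking_share: float      # fraction of exercises that were speaking
    review_accuracy: float     # accuracy on previously mastered items, 0..1
    new_content_share: float   # fraction of studied items that were new


METRICS = (
    "avg_session_minutes",
    "speaking_share",
    "review_accuracy",
    "new_content_share",
)


def drift_signals(
    baseline: WeekSummary, recent: WeekSummary, drop: float = 0.25
) -> list[str]:
    """Names of metrics that fell by more than `drop` relative to baseline."""
    flagged = []
    for name in METRICS:
        before = getattr(baseline, name)
        after = getattr(recent, name)
        if before > 0 and (before - after) / before > drop:
            flagged.append(name)
    return flagged


def is_drifting(
    baseline: WeekSummary, recent: WeekSummary, min_signals: int = 3
) -> bool:
    """A single dip is treated as noise; several together count as drift."""
    return len(drift_signals(baseline, recent)) >= min_signals
```

For example, a learner whose sessions shrink from 20 to 12 minutes but who is otherwise unchanged would not be flagged, while one whose session length, speaking share, and new-content share all drop sharply would be.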

The Intervention: What Happens When AI Spots the Warning Signs

"Okay, so the AI knows I'm burning out. Then what?"

Fair question. Detection without response is just surveillance. The real breakthrough in 2026 isn't that systems can identify the signs of language study burnout. It's what they do about them.

The Quiet Pivot

The most effective emotion-aware AI tutors don't announce that they've noticed a problem. They don't flash a popup that says "You seem tired!" Research from Carnegie Mellon's Human-Computer Interaction Institute confirms what we'd probably guess: explicit emotional labeling from a machine feels patronizing. It backfires.

Instead, the best systems execute what researchers call a "re-engagement pivot." The lesson shifts. Subtly. The grammar drill becomes a cultural storytelling module. The vocabulary test transforms into a music-based listening exercise. The difficulty curve flattens just enough to restore the feeling of competence without being obvious about it.

At LingoTalk, this is a principle we think about constantly — the idea that the best adaptive experience is one the learner never consciously notices adapting. The lesson just feels right that day, even on a bad day. Especially on a bad day.

Restoring Autonomy, Not Removing It

Here's where it gets nuanced. One of the most robust findings from self-determination theory — the psychological framework underpinning most modern language learning motivation research — is that autonomy is non-negotiable. The moment we feel controlled, intrinsic motivation collapses.

So the smartest AI systems don't force a change. They offer lateral choices. "Want to try something different today?" They surface content aligned with the learner's interests rather than the syllabus. They might suggest a shorter session and frame it as a strategic choice rather than a concession.
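Here is a minimal sketch of what "offer, don't force" could look like in code. The planned lesson always stays on the list, and alternatives appear only when the learner seems to be drifting. The `Activity` type, the `lateral_options` function, and the selection rules (different kind of activity, matching interests, no longer than the planned lesson) are all hypothetical choices for illustration, not a description of how any real tutor works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    title: str
    kind: str      # e.g. "grammar_drill", "story", "music_listening"
    minutes: int
    topic: str


def lateral_options(
    planned: Activity,
    catalog: list[Activity],
    interests: set[str],
    drifting: bool,
    max_options: int = 3,
) -> list[Activity]:
    """Return choices to show the learner; the planned lesson is always first."""
    options = [planned]
    if not drifting:
        return options

    alternatives = [
        a for a in catalog
        if a.kind != planned.kind          # something genuinely different
        and a.topic in interests           # aligned with what the learner likes
        and a.minutes <= planned.minutes   # never heavier than the original plan
    ]
    # Shortest first, so a lighter session is the easiest one to pick.
    alternatives.sort(key=lambda a: a.minutes)
    return options + alternatives[: max_options - 1]
```

Keeping the planned lesson in the list is the point of the design: the learner can still choose the syllabus, so the system suggests rather than decides.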

The goal isn't to prevent the learner from stopping. It's to make sure that if we stop, it's a genuine decision — not the result of invisible emotional fatigue we never had the chance to address.

Why Generic Burnout Advice Fails Language Learners

"Just take a break."

We've all heard it. We've all given it. And it's not wrong, exactly. But it's incomplete in a way that matters enormously for recovering from language learning fatigue.

Here's the problem: language skills decay during breaks. We know this. We feel this. So "take a break" carries an implicit punishment — come back in two weeks and discover you've forgotten half of what you worked for. That awareness makes breaks stressful. And stressful breaks aren't really breaks.

Emotion-aware AI offers a third option between "push through" and "walk away." It creates what we might call an active recovery state. The intensity drops. The emotional demand drops. But the connection to the language stays alive. We stay in orbit even when we can't maintain altitude.

This is profoundly different from a rest day. It's the difference between a runner taking a day off and a runner switching to a gentle walk through a neighborhood they love. The body is still moving. The relationship with movement stays intact.

The Ethics We Need to Talk About

We should be honest: emotion-sensing technology raises real questions.

Who owns the emotional data? How long is it stored? Can it be used for purposes beyond lesson adaptation — say, marketing, or insurance profiling? What happens when the AI misreads a cultural communication style as disengagement?

These aren't hypothetical concerns. A 2026 position paper from the ACM Conference on Fairness, Accountability, and Transparency flagged significant bias in affect-detection models trained predominantly on Western English-speaking populations. Learners from cultures with different prosodic norms — tonal language speakers, for example — were disproportionately misclassified.

We need to demand better. Diverse training data. Transparent data policies. Learner control over what's collected and how it's used. The technology is powerful. That's exactly why the guardrails have to be built now, not after the next wave of adoption.

What This Means for Us in 2026 and Beyond

Let's return to that coffee conversation.

My friend didn't lack discipline. She didn't lack resources. She lacked a system that could see what she couldn't: that her emotional fuel tank had been emptying for weeks, and that by the time she noticed, it was already empty.

In 2026, we're watching the emergence of AI systems that treat us as whole humans, not just answer-generating machines. Systems that understand language learning burnout as an emotional event, not a knowledge gap. Systems that adapt not just to what we know, but to how we feel about knowing it.

This doesn't replace the human elements — the patient teacher, the encouraging study partner, the community that cheers us on. It extends them. It fills the gaps between human touchpoints with something that resembles, if imperfectly, genuine attentiveness.

We're not all the way there yet. The models are imperfect. The ethics are still being negotiated. The research is moving faster than the implementation.

But for the first time, the question isn't "why do so many people quit learning languages?" The question is: "what if the right intervention, at the right moment, could have kept them going?"

We think that question is worth everything. At LingoTalk, it's the question behind every feature we build — because we believe the learner who almost quit but didn't is the one who eventually becomes fluent.

So if you've felt that slow fade recently — the creeping indifference, the sessions that feel like obligations — know this: it's not weakness. It's a signal. And increasingly, the tools we're building are learning to hear it before we do.

Stay in orbit. We'll figure the rest out together.
