When Your AI Language Tutor Is Wrong: How to Spot AI Hallucinations That Could Ruin Your Fluency in 2026

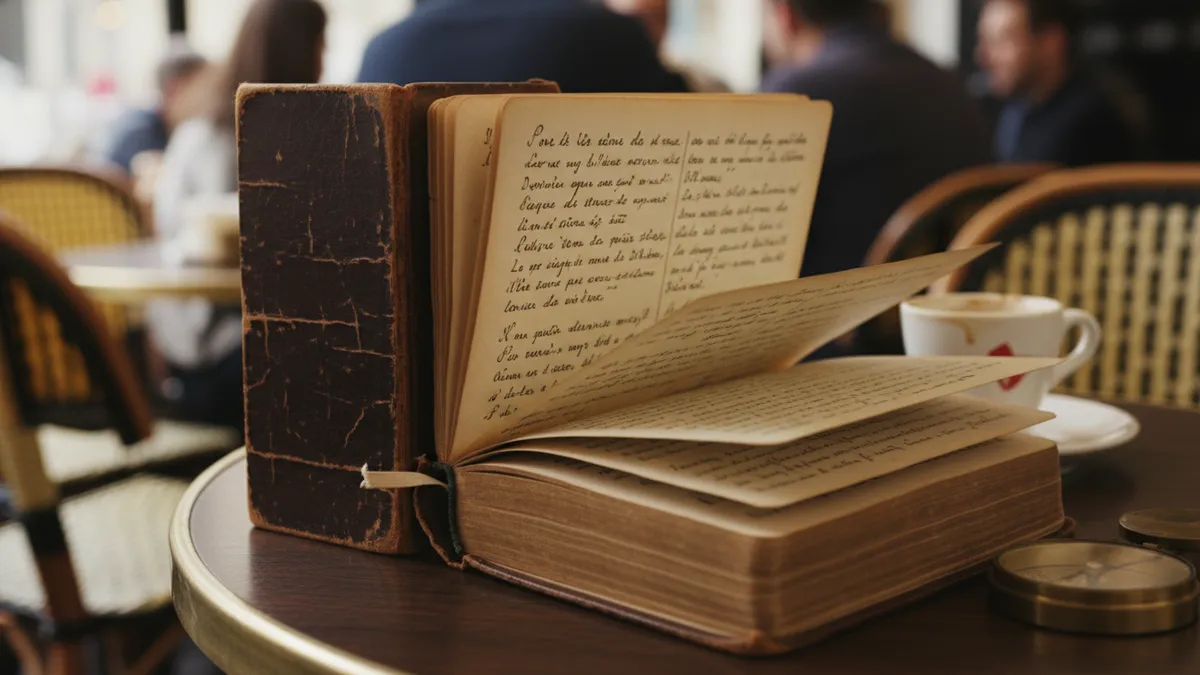

Picture this: we're sitting in a café in Lyon, confidently ordering in French, weaving in a colorful idiom we picked up from our AI tutor last month. The waiter pauses. His eyebrow lifts. He doesn't laugh — it's worse than that. He looks genuinely confused. The phrase we used with such pride? It doesn't exist. Our AI language tutor invented it, delivered it to us with total confidence, and we never questioned it.

That scene is playing out thousands of times a day in 2026, and most of us don't even realize it's happening.

AI hallucinations — when language models generate information that sounds authoritative but is completely fabricated — have become one of the most talked-about issues in technology this year. But here's what almost nobody is discussing: what happens when those hallucinations slip into our language learning? When the wrong grammar rule, the fake idiom, or the nonexistent word gets absorbed into our practice routine and quietly fossilizes into a habit we carry for years?

Let's rewind. Let's talk about what's actually going wrong, why it's so hard to catch, and how we protect ourselves.

How AI Language Learning Hallucinations Actually Work

The broader AI landscape in 2026 runs on large language models that predict the most likely next token (roughly, the next word or word fragment) in a sequence. That prediction engine works brilliantly for generating natural-sounding text. It works less brilliantly for guaranteeing factual accuracy. And it can outright fail when the task is teaching the specific, rule-governed structures of a foreign language.

Here's the core problem: these models don't know Spanish or Mandarin or German. They pattern-match from enormous datasets. When the data is thin — say, a less common grammatical construction in Turkish, or a regional dialect in Portuguese — the model fills in the gap. It guesses. And it guesses with the same calm, authoritative tone it uses when it's absolutely right.

That's the hallucination. Not a glitch. Not a bug report waiting to happen. A fundamental feature of how generative AI works, wearing the mask of expertise.
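To make the "same confidence, thinner evidence" point concrete, here is a deliberately tiny sketch. It is a toy word-pair counter, not how a real large language model is built (those are neural networks trained on tokens), and the five French sentences are a made-up sample. The function names `train_bigrams` and `predict_next` are ours, chosen for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count which word follows which across the training sentences."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return (most likely next word, its probability, evidence count)."""
    counts = follows.get(word.lower())
    if not counts:
        return None, 0.0, 0
    total = sum(counts.values())
    best, best_count = counts.most_common(1)[0]
    return best, best_count / total, total


# Made-up sample: "mange" is seen three times, "plante" only once.
corpus = [
    "je mange une pomme",
    "je mange une poire",
    "je mange une orange",
    "elle boit une tisane",
    "il plante un cerisier",
]
model = train_bigrams(corpus)
well_attested = predict_next(model, "mange")
thinly_attested = predict_next(model, "plante")
```

Both predictions come back with a probability of 1.0, even though one rests on three observations and the other on a single sentence. The probability alone says nothing about how much data stands behind it, which is the gap a learner cannot see from the tutor's tone.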

Real Examples: When AI Tutors Teach Wrong Grammar and Fake Phrases

We've been collecting examples throughout 2025 and into 2026. The patterns are striking — and honestly, a little alarming.

Invented idioms that sound perfectly plausible. One popular AI tutor app taught a user the German expression "den Fisch ins Fenster werfen" (to throw the fish into the window), claiming it means to waste an opportunity. Native German speakers have never heard it. The AI stitched together German words into something that felt idiomatic. It followed the shape of real German idioms. It just wasn't one.

Confident but incorrect grammar rules. We've seen AI tutors explain that in Japanese, the particle は (wa) can never appear twice in a single sentence. That sounds reasonable. It's tidy. It's also wrong: sentences with two は are well attested, typically with one marking the topic and the other marking contrast, as in 私は肉は食べません ("I don't eat meat," with the implication that other foods are fine). The AI simplified a nuanced rule into a false absolute.

Nonexistent vocabulary presented as standard. A French learning session generated the word "éclairitude" as a synonym for clarity. It follows French morphological patterns perfectly. It sounds beautiful. It is not a word.

These aren't edge cases. These are the kinds of AI tutor errors happening daily across mainstream apps in 2026. And the scariest part? Each one arrived wrapped in a confident explanation.

Why AI Language Learning Mistakes Are So Dangerous for Fluency

Now we start to see why this matters more than we initially thought.

Language learning is cumulative. Every word we practice and every grammar pattern we internalize becomes part of the architecture of our fluency. Linguists call the risk fossilization: an error gets repeated so often that it stabilizes and resists even explicit correction.

A wrong fact in a history chatbot? We'll encounter the correction eventually. A wrong idiom baked into our active vocabulary? That can live in our speech for decades.

Consider the stakes across different levels. A beginner might absorb a false conjugation rule and build hundreds of future sentences on a cracked foundation. An intermediate learner might adopt a hallucinated idiom and deploy it in a job interview, a date, a client meeting. An advanced learner might trust a subtle AI-generated grammar nuance that shifts their register in ways native speakers find strange but can't quite pinpoint.

The higher we climb, the more the errors matter. The more they hide.

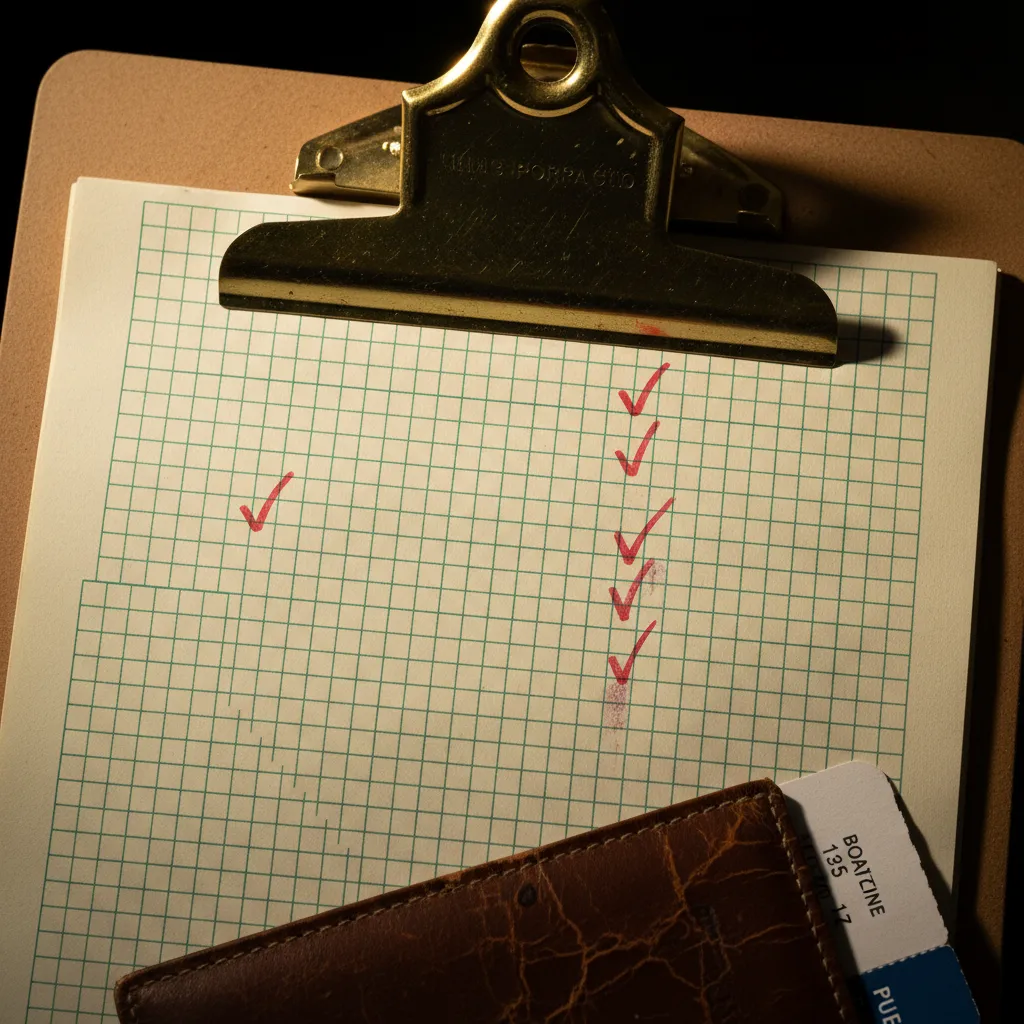

The Checklist: How to Spot AI Hallucinations Before They Stick

So what do we actually do? We can't fact-check every single sentence our AI tutor generates — that would defeat the purpose of having a tutor. But we can develop a sharp eye for the warning signs.

Here's the practical checklist we've built from studying hundreds of AI tutor errors:

1. Watch for Over-Confident Absolutes

When our AI tutor says "always" or "never" about a grammar rule, that's our cue to pause. Languages are messy. Rules have exceptions. If the explanation feels too clean, too simple, too satisfying — it might be a hallucination smoothing over real complexity.
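If we save our tutor's explanations as text, a few lines of Python can do a first pass for us. This is only a sketch: the word list in `ABSOLUTE_TERMS` is our own guess at useful trigger words, and a match means "look this up," not "this is wrong."

```python
import re

# Assumed trigger words; extend or trim this list to taste.
ABSOLUTE_TERMS = ("always", "never", "every", "all", "none", "only",
                  "without exception")

_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in ABSOLUTE_TERMS) + r")\b",
    re.IGNORECASE,
)


def flag_absolutes(explanation):
    """Return the absolute-sounding terms found, in order of appearance."""
    return [match.group(1).lower() for match in _PATTERN.finditer(explanation)]


claim = "In Japanese, the particle wa can never appear twice in a single sentence."
flags = flag_absolutes(claim)
```

For the claim above, `flags` contains `"never"`, while a hedged explanation such as "Adjectives usually follow the noun." comes back empty. Plenty of true rules use these words too, so the list is a prompt to verify rather than a verdict.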

2. Cross-Reference New Idioms and Expressions

Any idiom or set phrase we learn from AI deserves a quick search. Plug it into a native-language corpus, a dictionary, or a forum where native speakers gather. If we can't find independent evidence that real humans use this expression, we probably shouldn't either.
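For anyone who keeps a folder of native-language text (subtitles, news articles, forum exports), the same check can be sketched in code. The three sentences in `sample_corpus` are invented stand-ins for such a collection, `count_attestations` is a hypothetical helper name, and the search uses the uninflected core of each phrase because the verb will be conjugated in real sentences.

```python
def count_attestations(phrase, corpus_sentences):
    """Count sentences containing the phrase, ignoring case and extra spaces."""
    needle = " ".join(phrase.lower().split())
    return sum(
        1
        for sentence in corpus_sentences
        if needle in " ".join(sentence.lower().split())
    )


# Invented stand-in for a real, much larger collection of native text.
sample_corpus = [
    "Man sollte nie die Katze im Sack kaufen.",
    "Er hat die Katze im Sack gekauft.",
    "Wir haben gestern Fisch gegessen.",
]

real_idiom_hits = count_attestations("die Katze im Sack", sample_corpus)
tutor_idiom_hits = count_attestations("den Fisch ins Fenster", sample_corpus)
```

Zero hits in three sentences proves nothing, of course. The signal only becomes meaningful against a large corpus, where an established idiom like "die Katze im Sack kaufen" turns up repeatedly and an invented one stays at zero.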

3. Be Extra Skeptical at the Edges

AI language apps tend to be most accurate on common, well-documented patterns in major languages. The moment we venture into slang, regional expressions, formal registers, or less commonly studied languages, the hallucination risk spikes. That's where we slow down and verify.

4. Test Explanations, Not Just Answers

Ask the AI why a grammar rule works the way it does. Then ask it to show five example sentences. Hallucinated rules tend to wobble under pressure — the explanation might contradict itself, or the examples might subtly violate the rule the AI just stated.
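The "do the examples obey the rule?" test can be written down as a small harness. In this sketch the rule is the false Japanese claim from earlier in the article, expressed as a Python predicate. Counting the character は is a crude assumption: it would over-count words that merely contain は, and a serious check would need a morphological analyzer. The helper name `find_counterexamples` is ours.

```python
def find_counterexamples(rule, examples):
    """Return the examples that break the rule the tutor just stated."""
    return [example for example in examples if not rule(example)]


def at_most_one_wa(sentence):
    """The tutor's claimed rule: は appears at most once per sentence.

    Crude assumption: every は character is treated as the particle.
    """
    return sentence.count("は") <= 1


# Sentences we might get back when asking the tutor for examples.
tutor_examples = [
    "私は学生です",
    "今日は雨です",
    "私は肉は食べません",
]

counterexamples = find_counterexamples(at_most_one_wa, tutor_examples)
```

Here the third sentence lands in `counterexamples`: the tutor's own example contradicts the rule it stated, which is exactly the wobble worth catching.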

5. Look for the "Too Perfect" Pattern

Real languages have irregular verbs, exceptions, and constructions that exist simply because they evolved that way. If every example the AI provides is symmetrical and elegant, we might be looking at a pattern the model invented rather than one the language actually contains.

Why Some AI Language Tools Get It Wrong More Than Others

Not all AI tutors are equally prone to hallucination. The difference comes down to architecture — specifically, whether the system has guardrails designed for language teaching or whether it's just a general-purpose chatbot wearing a tutor costume.

General-purpose AI models are brilliant conversationalists. They can chat about grammar, role-play ordering coffee in Italian, and explain subjunctive mood with charm. But they lack the structured verification layer that catches fabricated content before it reaches us.

This is something we think about constantly at LingoTalk. Our approach layers AI generation with linguistic validation — cross-referencing generated content against verified corpora, grammatical rule databases, and native-speaker-reviewed materials. It's not a perfect system. No system is, and anyone who claims otherwise is selling something. But it's a fundamentally different architecture than simply letting a language model improvise.
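To show the general shape of "generate, then validate," here is a minimal sketch. It is an illustration of the idea only, not a description of LingoTalk's actual system: the function `split_by_verification`, the three-word `verified_lexicon`, and the exact-match lookup are all simplifying assumptions.

```python
def split_by_verification(candidates, verified_lexicon):
    """Separate generated items into verified ones and ones needing review."""
    verified, needs_review = [], []
    for item in candidates:
        key = item.strip().lower()
        if key in verified_lexicon:
            verified.append(item)
        else:
            needs_review.append(item)
    return verified, needs_review


# Hypothetical verified word list; a real one would be far larger.
verified_lexicon = {"clarté", "lumière", "netteté"}

# Vocabulary a model might propose as synonyms for "clarity".
generated = ["clarté", "éclairitude"]

approved, held_back = split_by_verification(generated, verified_lexicon)
```

With this input, "clarté" is approved and "éclairitude" is held back for review before it ever reaches a learner. The design choice worth noting is that unknown items are held rather than silently dropped or passed through, since a word missing from the lexicon might be rare rather than invented.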

The question isn't whether we should use AI for language learning in 2026. We absolutely should — the conversational practice, the personalization, the availability are transformative. The question is whether our AI tool has accuracy safeguards built into its bones, or whether we're flying without a net.

Building a Verification Habit That Doesn't Slow Us Down

Here's where we pull it all together.

The goal isn't to become paranoid about every sentence our AI tutor produces. That would kill the joy, the flow, the momentum that makes AI language learning so powerful. The goal is to build a lightweight verification habit — something that takes seconds, not minutes, and becomes second nature.

Think of it like driving. We don't consciously analyze every car on the road. But we've trained ourselves to notice when something feels off — a car drifting, a brake light pattern that signals trouble. We can develop the same instinct for AI-generated language content.

Start small. Let's pick one session per week and actively fact-check three things our tutor taught us in it. Over time, our internal radar sharpens. We start feeling when something sounds too smooth, too invented, too convenient. That instinct becomes our most reliable AI language learning tool.

The Fluency We Build Deserves a Solid Foundation

Let's zoom back out. Across the globe in 2026, millions of us are using AI to learn languages in ways that would have seemed like science fiction five years ago. We're having real-time conversations with AI partners. We're getting personalized grammar corrections. We're building fluency faster than any previous generation of learners.

That progress is real. That progress is extraordinary.

But it's built on trust — trust that the information we're absorbing is accurate. And in a world where AI can hallucinate with perfect confidence, that trust needs to be earned, verified, and reinforced.

We owe it to our future selves, the ones sitting in that café in Lyon and ordering with genuine fluency, to make sure that every phrase we've learned actually exists, that every grammar rule holds up under scrutiny, and that every word we carry into conversation is one a native speaker would recognize.

That's the standard. Let's hold our tools to it — and let's hold ourselves to the habit of checking.

At LingoTalk, we're building with that standard in mind. But regardless of which tool we choose, the most powerful safeguard is an informed learner. Now we know what to watch for. Now we build fluency on solid ground.
